Global competition in artificial intelligence (AI) has been intensifying, with numerous prominent tech companies joining the fray. Now Amazon has committed to invest as much as $4 billion in the AI startup Anthropic.

Amazon will initially invest $1.25 billion, with the option to raise its total investment to as much as $4 billion. The e-commerce giant will hold a minority stake in the firm. For Anthropic, the deal brings not only more funding but also access to the computing capacity of Amazon Web Services (AWS), a critical resource for advancing AI model development.

Unpacking the Amazon-Anthropic Deal: What You Need to Know

Anthropic has been using AWS services since 2021. Under the terms of the investment arrangement, Anthropic has selected AWS as its primary cloud provider for critical tasks, including safety research and the development of upcoming foundation models, according to the e-commerce company. In addition, Anthropic will use AWS Trainium and Inferentia chips to build, train, and deploy its forthcoming foundation models. Andy Jassy, Amazon’s chief executive, said,

“Customers are quite excited about Amazon Bedrock, AWS’s new managed service that enables companies to use various foundation models to build generative AI applications on top of, as well as AWS Trainium, AWS’s AI training chip, and our collaboration with Anthropic should help customers get even more value from these two capabilities.”

Is Amazon Late to the AI Party?

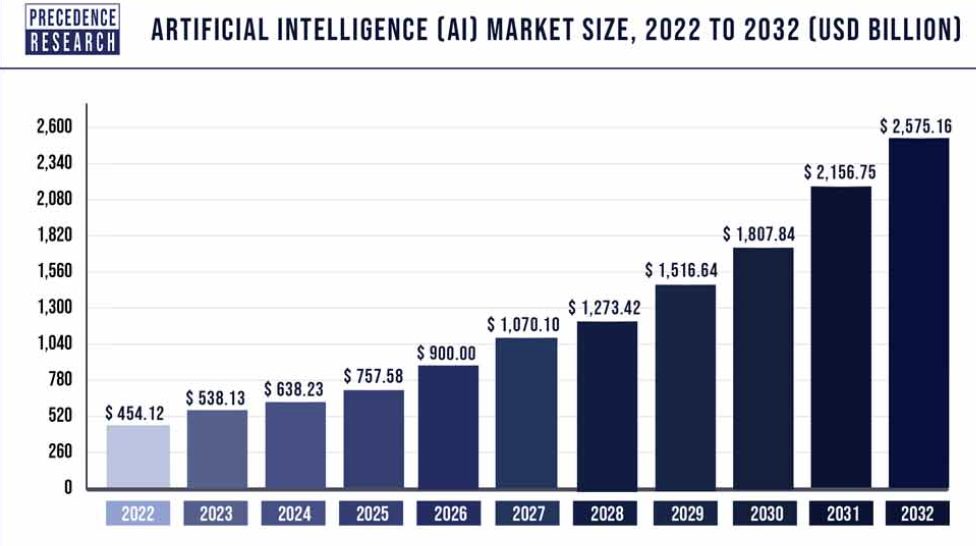

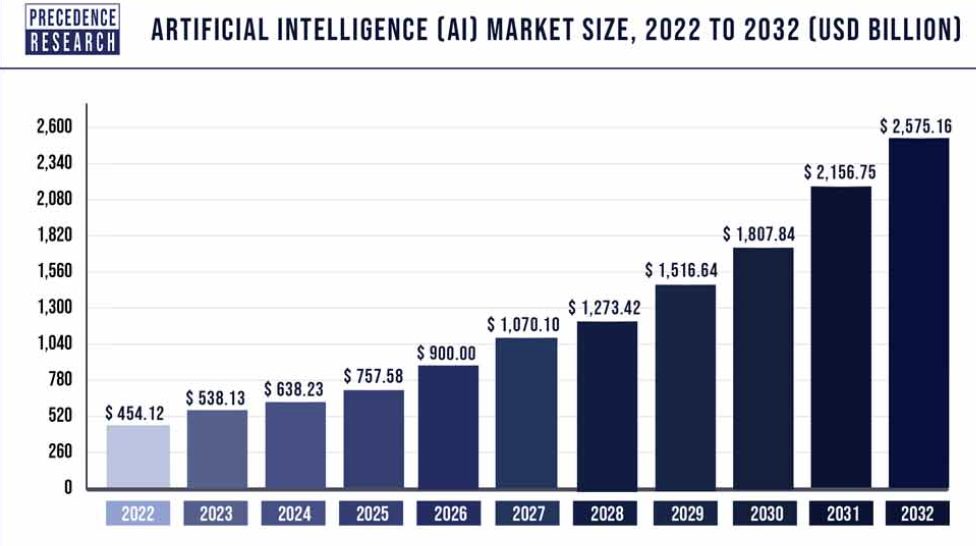

The worldwide AI market was valued at $454.12 billion in 2022. It is projected to reach about $2,575.16 billion by 2032, a compound annual growth rate of roughly 19% from 2023 to 2032.
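
For readers who want to check the growth figure, here is a minimal Python sketch of the standard compound annual growth rate calculation applied to the two market values quoted above. The function name `cagr` and the choice of ten compounding years (2022 to 2032) are assumptions made for illustration; the source of the forecast may count the period differently.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.19 means 19%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market values quoted in the article, in billions of US dollars.
market_2022 = 454.12
market_2032 = 2575.16

# Assumption: ten compounding years between the 2022 value and the 2032 projection.
rate = cagr(market_2022, market_2032, years=10)
print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption the implied rate comes out close to the 19% cited, so the two dollar figures and the growth rate are consistent with each other.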

Dario Amodei, the CEO and co-founder of Anthropic, has committed to giving Amazon Web Services customers worldwide access to future generations of its foundation models through Amazon Bedrock on a “long-term” basis. He said,

“The last 10 years, there’s been this remarkable increase in the scale that we’ve used to train neural nets and we keep scaling them up, and they keep working better and better. That’s the basis of my feeling that what we’re going to see in the next 2, 3, 4 years… what we see today is going to pale in comparison to that.”
